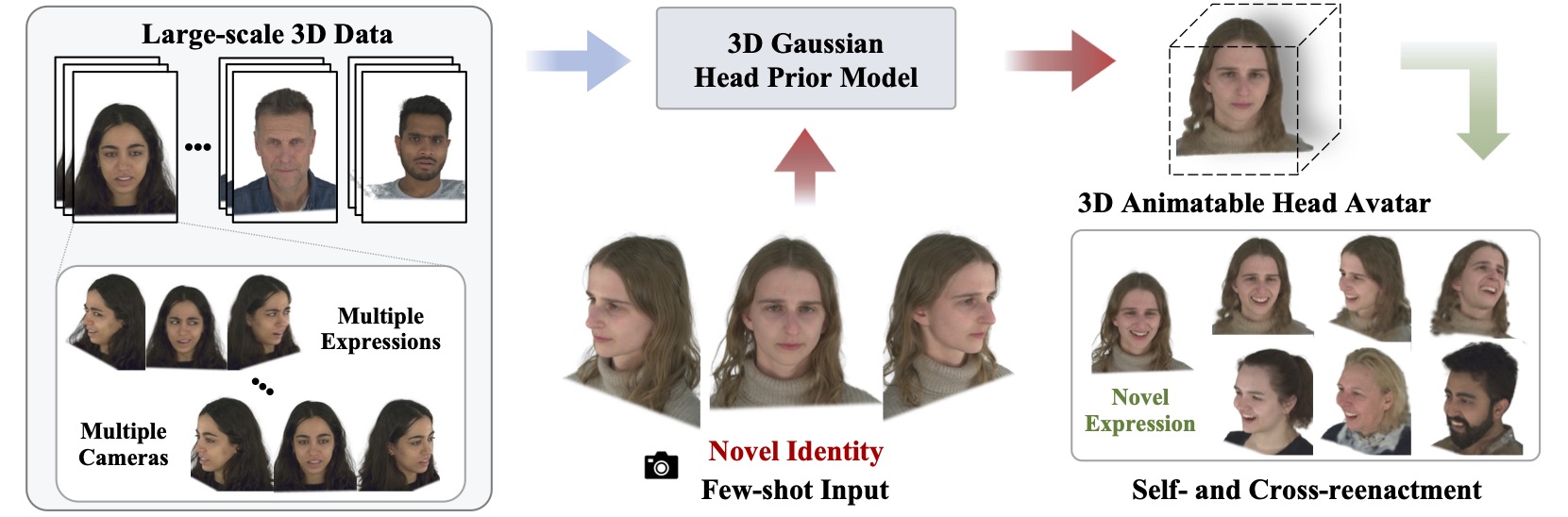

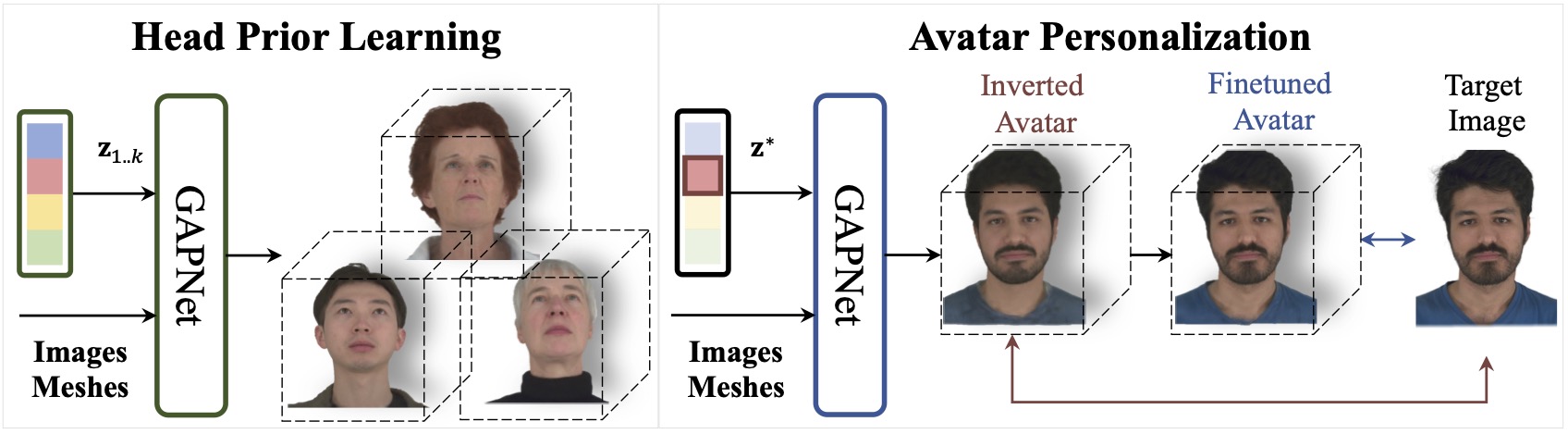

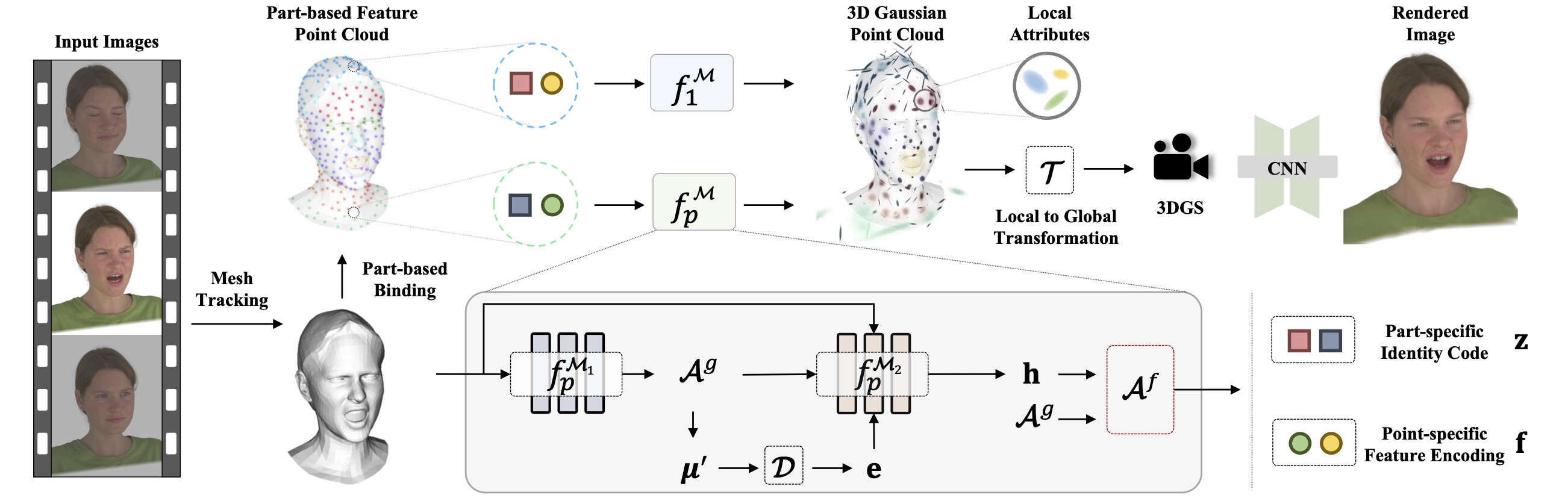

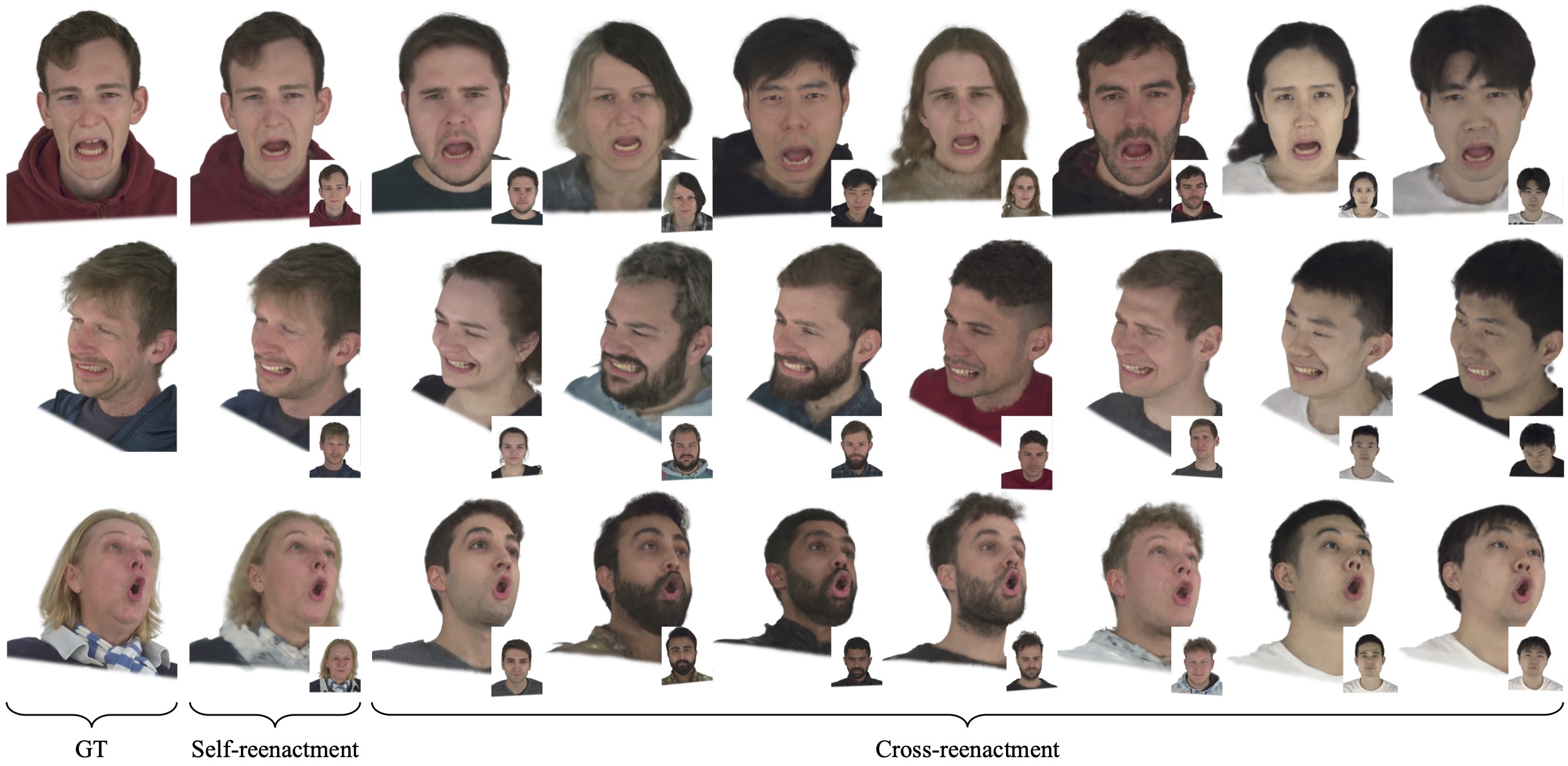

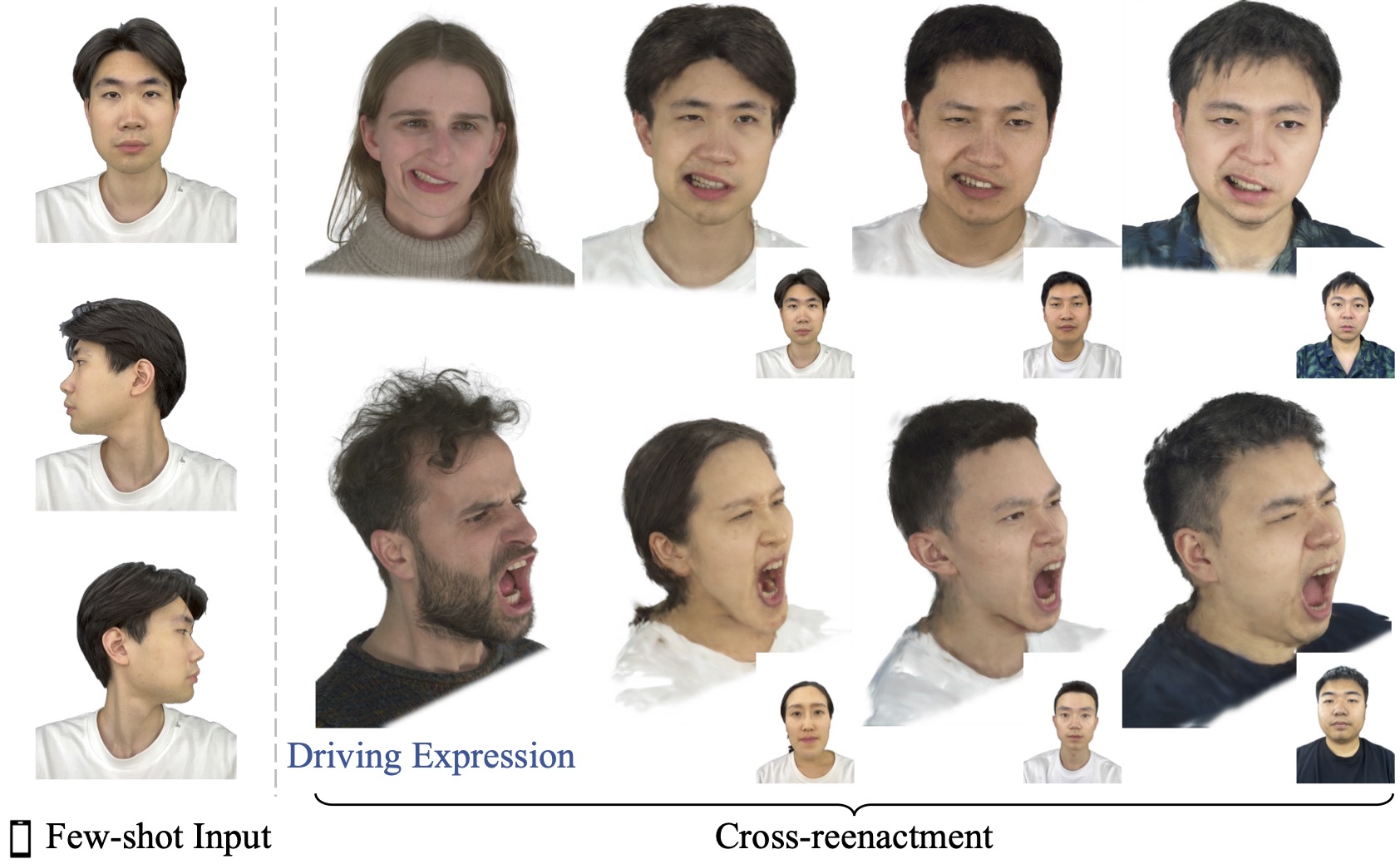

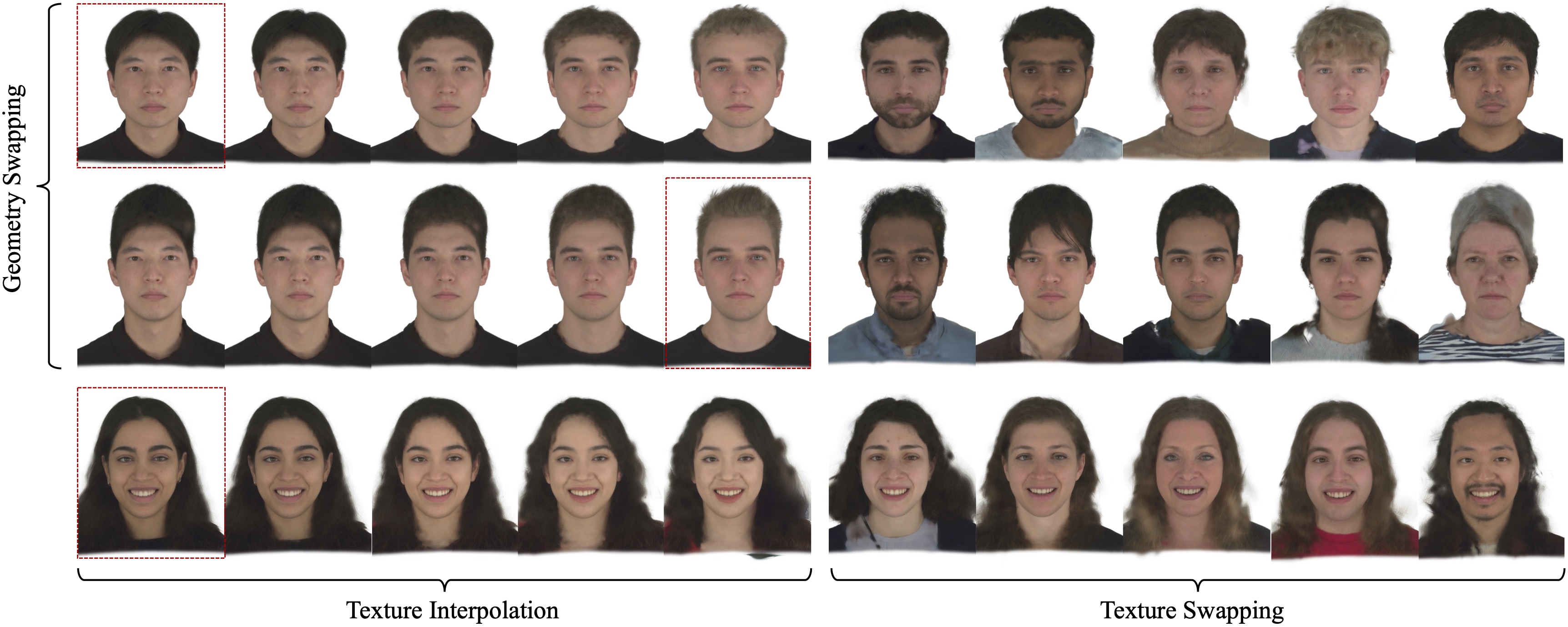

In this paper, we present a novel 3D head avatar creation approach that generalizes from few-shot in-the-wild data while delivering high fidelity and robust animation. Because this problem is underconstrained, incorporating prior knowledge is essential. We therefore propose a framework comprising a prior learning phase and an avatar creation phase. The prior learning phase derives 3D head priors from a large-scale multi-view dynamic dataset, and the avatar creation phase applies these priors for few-shot personalization. Our approach captures these priors with a Gaussian Splatting-based auto-decoder network with part-based dynamic modeling. The network employs identity-shared encoding together with personalized latent codes for individual identities to learn the attributes of Gaussian primitives. During the avatar creation phase, we achieve fast head avatar personalization by leveraging inversion and fine-tuning strategies. Extensive experiments demonstrate that our model effectively exploits head priors and successfully generalizes them to few-shot personalization, achieving photo-realistic rendering quality, multi-view consistency, and stable animation.
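The two-stage personalization described above (inversion, then fine-tuning) can be illustrated with a toy sketch. This is not the HeadGAP implementation: the linear decoder `W` stands in for the pre-trained Gaussian Splatting auto-decoder, `target` stands in for the few-shot observations, and every name, size, and hyperparameter here is a hypothetical choice made for illustration only.

```python
import numpy as np


def loss_fn(W, z, target):
    """Squared reconstruction error of the toy decoder output W @ z."""
    r = W @ z - target
    return 0.5 * float(r @ r)


def invert(W, target, steps=200):
    """Stage 1 (inversion): keep the decoder W frozen, optimize only the latent z."""
    z = np.zeros(W.shape[1])
    # Step size from the spectral norm keeps plain gradient descent stable.
    lr = 1.0 / np.linalg.norm(W, 2) ** 2
    for _ in range(steps):
        z -= lr * (W.T @ (W @ z - target))
    return z


def fine_tune(W, z, target, steps=200, lr=1e-2):
    """Stage 2 (fine-tuning): update decoder and latent jointly, starting from stage 1."""
    W, z = W.copy(), z.copy()
    best = loss_fn(W, z, target)
    for _ in range(steps):
        r = W @ z - target
        W_new = W - lr * np.outer(r, z)   # gradient w.r.t. W is r z^T
        z_new = z - lr * (W.T @ r)        # gradient w.r.t. z is W^T r
        new = loss_fn(W_new, z_new, target)
        if new < best:
            W, z, best = W_new, z_new, new
        else:
            lr *= 0.5                     # reject the step and shrink the step size
    return W, z


rng = np.random.default_rng(0)
W_prior = rng.normal(size=(12, 4))   # hypothetical "prior" decoder: 4-D latent -> 12 attributes
target = rng.normal(size=12)         # hypothetical observation for a new identity

l0 = loss_fn(W_prior, np.zeros(4), target)   # before personalization
z_inv = invert(W_prior, target)
l1 = loss_fn(W_prior, z_inv, target)         # after inversion
W_ft, z_ft = fine_tune(W_prior, z_inv, target)
l2 = loss_fn(W_ft, z_ft, target)             # after fine-tuning
print(l0, l1, l2)
```

In this toy setting, inversion finds the best latent code the frozen prior can offer, and fine-tuning then adapts the decoder itself to reduce the residual that the prior alone cannot explain.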

@article{zheng2024headgap,
  title={HeadGAP: Few-shot 3D Head Avatar via Generalizable Gaussian Priors},
  author={Zheng, Xiaozheng and Wen, Chao and Li, Zhaohu and Zhang, Weiyi and Su, Zhuo and Chang, Xu and Zhao, Yang and Lv, Zheng and Zhang, Xiaoyuan and Zhang, Yongjie and Wang, Guidong and Xu, Lan},
  journal={arXiv preprint arXiv:2408.06019},
  year={2024}
}